When ChatGPT launched in late 2022, it quickly became popular. Within weeks, millions of people were using it to write content, generate code, and ask questions. It felt sudden, but it was the result of years of research and steady progress. What made the difference was turning powerful technology into a simple product that anyone could use.

But behind this moment was one major technical breakthrough: the Transformer architecture.

Key Takeaways

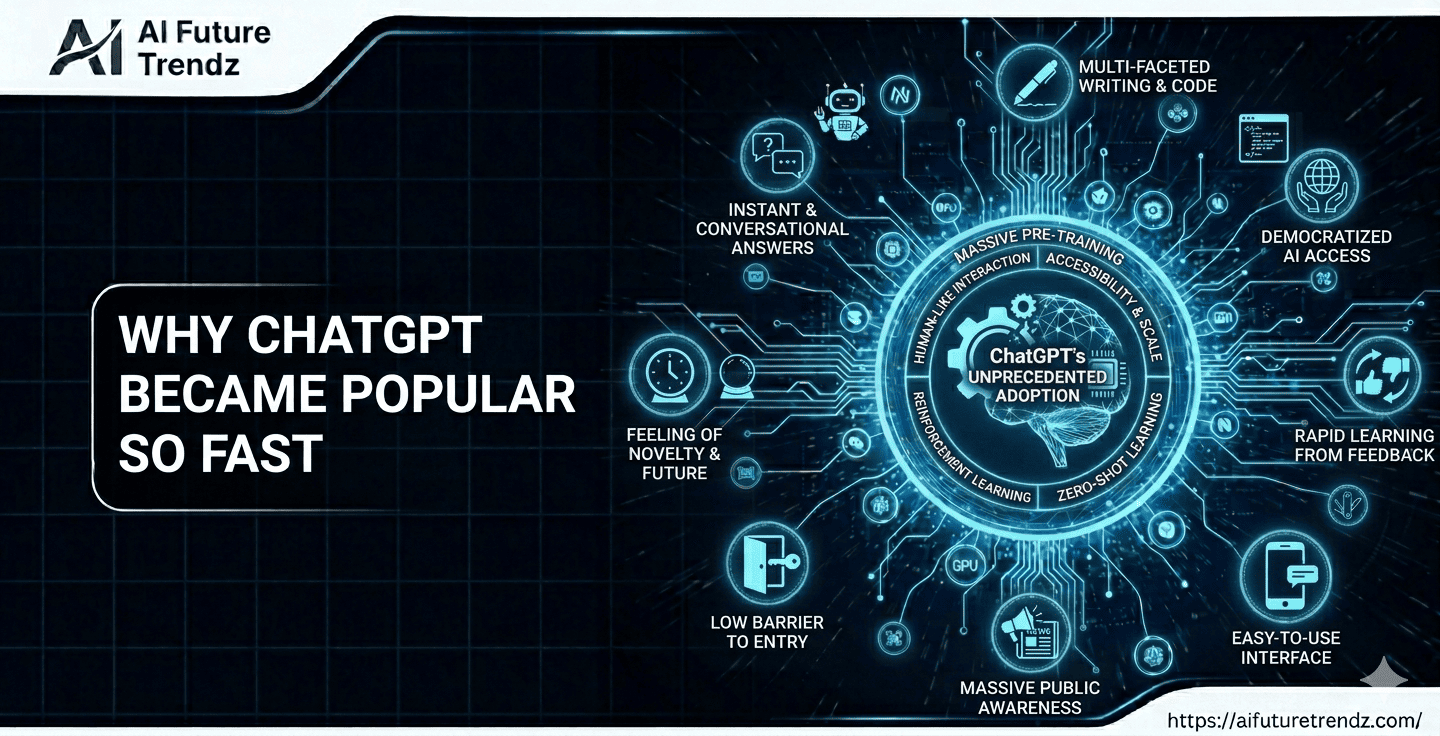

- ChatGPT’s rapid popularity was the result of years of research in large language models and Transformer-based architectures.

- The 2017 research paper "Attention Is All You Need" introduced the Transformer architecture, which became the foundation for modern AI systems.

- Transformers improved how AI understands context by using self-attention, allowing models to process entire sentences simultaneously.

- OpenAI scaled Transformer models aggressively, training large systems like GPT-3 using massive datasets and cloud infrastructure.

- The simple chat interface of ChatGPT made advanced AI accessible to everyday users without technical knowledge.

- ChatGPT’s success triggered a global AI race, pushing companies like Google, Meta, Anthropic, and Microsoft to accelerate their AI development.

The Foundation: The Transformer Breakthrough

In 2017, researchers at Google published a paper called "Attention Is All You Need". They were trying to improve machine translation, because the recurrent neural network (RNN) models of the time were slow to train and struggled with long sentences. From that work came a new architecture: the Transformer. At the time, it looked like just another research advance. Few imagined it would spark an AI revolution.

Before Transformers, models processed text sequentially - word by word. This made it harder to understand long sentences and long-range context. The Transformer introduced a mechanism called self-attention, allowing models to look at all words at once and understand relationships between them more effectively.

This made models faster to train, easier to scale, and significantly better at understanding context. Almost every major modern AI model - including ChatGPT, Gemini, Claude, and LLaMA - is built on Transformer-based foundations.
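To make the self-attention idea concrete, here is a minimal sketch in plain Python. It is a simplification under stated assumptions, not the paper's full method: the function names `softmax` and `self_attention` and the tiny two-number "embeddings" are hypothetical, and each word vector acts directly as its own query, key, and value, whereas real Transformers first pass the inputs through learned weight matrices and use several attention heads.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product self-attention without learned projections.

    Assumption: each input vector serves as its own query, key, and value.
    """
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Compare this word with every word in the sentence, itself included.
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in vectors]
        weights = softmax(scores)
        # Blend all word vectors according to the attention weights.
        mixed = [sum(w * vec[i] for w, vec in zip(weights, vectors))
                 for i in range(dim)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical 2-dimensional "embeddings" for a three-word sentence.
sentence = {"the": [1.0, 0.0], "cat": [0.0, 1.0], "sat": [1.0, 1.0]}
outputs, weights = self_attention(list(sentence.values()))
for word, row in zip(sentence, weights):
    print(word, [round(w, 2) for w in row])
```

Each printed row shows how much one word attends to every word in the sentence. The loop here runs one word at a time for readability, but no word's result depends on another word's result, so hardware can compute all of them in parallel. That independence is what the sequential, word-by-word models lacked.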

The Friendly Race: Google, OpenAI, and Others

In the late 2010s, multiple companies were advancing artificial intelligence. Google introduced the Transformer in 2017 and later released BERT in 2018, improving language understanding.

Meanwhile, OpenAI had started in 2015 as a small research lab. They didn't have Google-level data centers or custom TPUs, and they didn't even have a strong business model. But they had one bold idea: What if we scale this Transformer massively and train it on as much of the internet's text as possible?

In 2019, Microsoft invested $1 billion and provided large-scale Azure infrastructure. That changed everything. OpenAI released GPT-3 in 2020, and in November 2022 it released ChatGPT, built on a model from the GPT-3.5 series. Within five days, it reportedly passed one million users. For the first time, AI wasn't just inside products - it became the product.

Google already had powerful models like BERT and LaMDA, but it was cautious about reputational damage, wrong answers, and risks to search revenue. OpenAI took the risk. That single move triggered a global AI race - Google pushed Bard (later Gemini), Meta released LLaMA, Anthropic launched Claude, and Microsoft built AI into products such as Bing and Office.

Why 2022 Was the Turning Point

Three major things came together around 2022–2023.

1. Mature Transformer Technology

The Transformer architecture had been tested and refined for years. Researchers understood how to train it efficiently at massive scale. The foundation was ready.

2. Bigger and Better Models

Models were trained on enormous datasets using massive compute. As they scaled up, they began showing new abilities like writing, coding, reasoning, and answering complex questions. These capabilities emerged because Transformer-based models scale extremely well.

3. Simple Chat Interface

The biggest change was putting all this power behind a clean chat box. No setup. No technical skills required. Anyone could type a question and get an answer instantly.

Why OpenAI Reached the Public First

Public-First Release Strategy

Instead of limiting access to researchers or enterprise clients, OpenAI released ChatGPT directly to the public as a free web product. Anyone could sign up and start using it immediately. That decision created instant visibility and viral adoption.

Faster Product Iteration

By launching early, OpenAI collected real user feedback at massive scale. This allowed rapid improvements in safety, usefulness, and conversational quality. The product improved week by week based on real-world usage.

Willingness to Take Controlled Risk

Releasing a conversational AI system to millions of users carries reputational risk. Large companies often move cautiously to protect existing businesses and brand trust. OpenAI moved faster, accepting early imperfections while continuing to improve the system.

The “Code Red” Moment

ChatGPT’s rapid growth triggered urgency across the tech industry. Google's management reportedly declared an internal “code red” in response, and other companies accelerated their AI efforts to compete in this new phase of innovation.

Conclusion

ChatGPT did not become popular only because of advanced AI models. It became popular because a powerful Transformer-based technology, refined over years by multiple companies, was packaged into a simple, easy-to-use product and launched at the right time.

FAQ

Why did ChatGPT gain users so fast?

It combined mature Transformer technology with a simple, accessible interface that anyone could use instantly.

Did OpenAI invent the core technology?

No. Google introduced the Transformer architecture in 2017. OpenAI focused on scaling it and turning it into a consumer-ready product.

Why is the Transformer so important?

The Transformer made it possible to train very large language models efficiently. It enabled better context understanding and scaling, which directly led to modern AI systems like ChatGPT.