Many people assume that large IT service companies should be able to build powerful AI models like the ones behind ChatGPT or Gemini. After all, they have thousands of engineers, global clients, and strong cash flow.

Technically, anyone can build a language model. The Transformer architecture that sparked the generative AI revolution is publicly documented, so any organization can implement it and train a model. But building a small model and building a frontier model are very different things. In reality, only a handful of organizations worldwide can operate at that level.

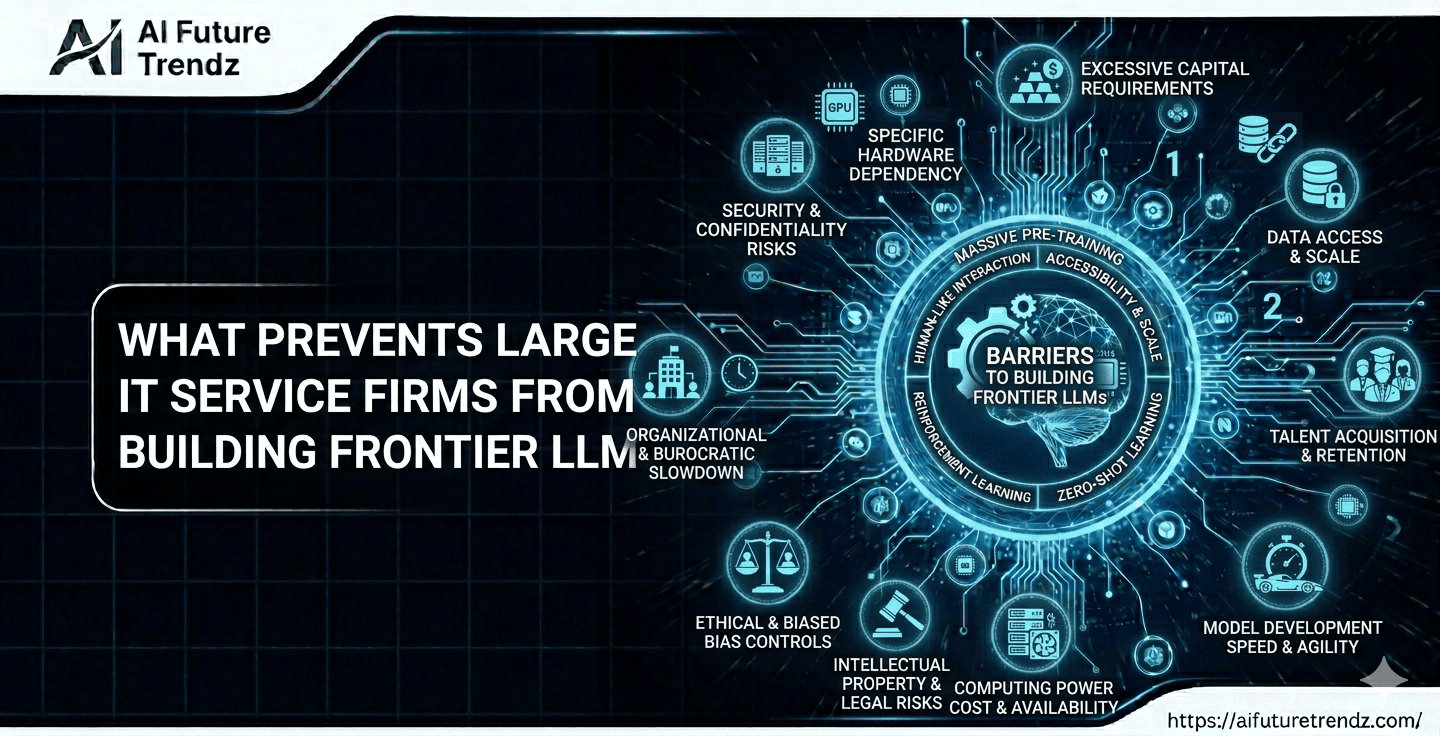

There are four major barriers that stop most companies.

Key Takeaways

- Building frontier large language models requires far more than just engineers or basic AI knowledge.

- Training state-of-the-art models costs hundreds of millions of dollars and requires long-term investment with uncertain returns.

- Frontier AI development depends on massive GPU infrastructure, often involving tens of thousands of specialized chips.

- Access to high-quality training data and large-scale human feedback pipelines is becoming a major competitive advantage.

- Companies like OpenAI and Google succeeded because they combine capital, infrastructure, research talent, and product-focused strategy.

- Many companies are instead adopting an open-source strategy by fine-tuning base models like LLaMA for specific industries.

The Four Big Walls Blocking New Players

1. The Financial Wall

Training a state-of-the-art model costs hundreds of millions of dollars. Running it at global scale adds even more cost. You need long-term funding with no guaranteed return.

Most IT service firms are built around predictable project revenue. Frontier AI research requires open-ended spending for years.

2. The Hardware Wall

Training a modern frontier model takes tens of thousands of specialized GPUs such as the NVIDIA H100. These chips are expensive and limited in supply. Only hyperscalers with deep pockets and strong supplier relationships can secure this level of hardware.
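To see why the financial and hardware walls go together, a rough estimate helps. A widely used rule of thumb puts training compute at about 6 × N × D floating-point operations, where N is the parameter count and D is the number of training tokens. The Python sketch below turns that into GPU-hours, wall-clock days, and rental cost. Every input is an illustrative assumption, not a figure for any real model: a hypothetical 400B-parameter model, 15T training tokens, an assumed dense BF16 peak of about 989 TFLOP/s per H100-class GPU, 40% achieved utilization, a 10,000-GPU cluster, and $2 per GPU-hour.

```python
# Back-of-envelope training cost using the common C ≈ 6·N·D approximation.
# All inputs in the example call are illustrative assumptions, not real model figures.


def training_flops(params: float, tokens: float) -> float:
    """Approximate total training FLOPs: about 6 FLOPs per parameter per token."""
    return 6.0 * params * tokens


def gpu_hours(total_flops: float, peak_flops_per_gpu: float, utilization: float) -> float:
    """GPU-hours needed at a given peak throughput (FLOP/s) and achieved utilization."""
    effective_flops_per_second = peak_flops_per_gpu * utilization
    return total_flops / effective_flops_per_second / 3600.0


def estimate(
    params: float,
    tokens: float,
    peak: float,
    utilization: float,
    num_gpus: int,
    usd_per_gpu_hour: float,
) -> dict:
    """Combine the pieces into compute, time, and rental-cost estimates."""
    flops = training_flops(params, tokens)
    hours = gpu_hours(flops, peak, utilization)
    return {
        "flops": flops,
        "gpu_hours": hours,
        "wall_clock_days": hours / num_gpus / 24.0,  # assumes perfect scaling across GPUs
        "compute_cost_usd": hours * usd_per_gpu_hour,
    }


if __name__ == "__main__":
    est = estimate(
        params=400e9,          # hypothetical 400B-parameter model
        tokens=15e12,          # hypothetical 15T training tokens
        peak=989e12,           # assumed dense BF16 peak per H100-class GPU, FLOP/s
        utilization=0.40,      # assumed 40% model FLOPs utilization
        num_gpus=10_000,       # assumed cluster size
        usd_per_gpu_hour=2.0,  # assumed rental price per GPU-hour
    )
    print(f"Training compute: {est['flops']:.2e} FLOPs")
    print(f"GPU-hours:        {est['gpu_hours']:,.0f}")
    print(f"Wall-clock days:  {est['wall_clock_days']:,.0f}")
    print(f"Compute cost:     ${est['compute_cost_usd']:,.0f}")
```

With these inputs the sketch comes to roughly 25 million GPU-hours, about 105 days on 10,000 GPUs, and around $50 million of rented compute. That covers a single final training run only; experiments, failed runs, data work, staff, and serving costs all come on top, which helps explain how total budgets climb far higher.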

3. The Data Wall

Most public internet text has already been used for training. Improving models now requires high-quality proprietary data or expert human labeling. This kind of data is costly and difficult to gather at scale.

4. The Talent Wall

Only a small number of researchers worldwide have experience training models at this scale. Hiring them is extremely competitive and expensive.

Why Companies Like OpenAI and Google Could Do It

Companies like OpenAI and Google were positioned differently. They had structural advantages that made building frontier models possible:

- Deep Capital Commitment: Training frontier LLMs requires billions in compute, research, and experimentation — often years before meaningful revenue. These companies were willing to invest long-term with high risk tolerance.

- Massive Compute Infrastructure: Frontier models demand enormous GPU clusters and large-scale cloud systems. Google already operated global data centers, and OpenAI partnered with Microsoft to access Azure’s hyperscale infrastructure.

- Product-First Strategy: For them, AI was not just a feature for clients — it was the core product. Their teams were organized around research, model scaling, safety, and global deployment.

- Access to Data and Research Ecosystems: Google had decades of search, web, and user interaction data, whereas OpenAI invested heavily in large-scale human feedback pipelines and safety research.

Large IT service firms, on the other hand, are optimized for client delivery, customization, and predictable service revenue. Frontier AI labs are optimized for long-term research bets and global product launches. These are fundamentally different operating models — and that difference matters.

The Middle Path: Open-Source Strategy

Many companies choose a smarter alternative. Instead of building a massive model from scratch, they start from openly available base models like LLaMA and fine-tune them for specific industries.

Companies can fine-tune models on country-level or industry-level data to create highly relevant systems without competing in the global frontier arms race. This approach is far cheaper and still delivers powerful AI solutions. It allows companies to focus on integration, domain knowledge, and enterprise deployment.
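Part of why fine-tuning is cheaper is that parameter-efficient methods update only a tiny slice of the model. LoRA (low-rank adaptation) is one such method, named here as an example rather than as what any particular company uses: it freezes each adapted weight matrix and trains two small matrices of rank r beside it, adding r × (d_in + d_out) trainable parameters per matrix. The sketch below counts them for an assumed LLaMA-7B-like shape (32 layers, hidden size 4096, rank 8, adapters on two attention projections per layer); all of these settings are illustrative choices.

```python
# Rough comparison of trainable parameters: full fine-tuning vs. LoRA adapters.
# The model shape below is an assumed LLaMA-7B-like configuration, for illustration only.


def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """LoRA adds A (rank x d_in) and B (d_out x rank) beside a frozen d_out x d_in weight."""
    return rank * (d_in + d_out)


def total_lora_params(num_layers: int, hidden: int, rank: int, matrices_per_layer: int) -> int:
    """Assumes every adapted matrix is square (hidden x hidden), e.g. attention q and v projections."""
    return num_layers * matrices_per_layer * lora_trainable_params(hidden, hidden, rank)


if __name__ == "__main__":
    full_params = 7e9  # assumed parameter count updated by full fine-tuning
    lora = total_lora_params(num_layers=32, hidden=4096, rank=8, matrices_per_layer=2)
    print(f"LoRA trainable params:  {lora:,}")
    print(f"Fraction of full model: {lora / full_params:.4%}")
```

With these settings the count is 4,194,304 trainable parameters, roughly 0.06% of an assumed 7 billion. The forward and backward passes still run through the full frozen model, so compute does not shrink in the same proportion; the main savings are in gradient and optimizer-state memory, which can let a run fit on far fewer GPUs.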

The future of AI will not be controlled by just a few frontier labs. There will be space for regional models, specialized systems, and strong enterprise execution.

FAQ

Can any company build a large language model?

Yes, the architecture is public. But building a frontier model requires massive funding, GPUs, data, and expert talent.

Why don’t IT service firms invest billions like tech giants?

Their business model focuses on steady project revenue and margins, not long-term research spending with uncertain outcomes.

What is the smart strategy for most companies?

Use open-source base models, fine-tune them for specific industries, and focus on integration and real-world deployment.