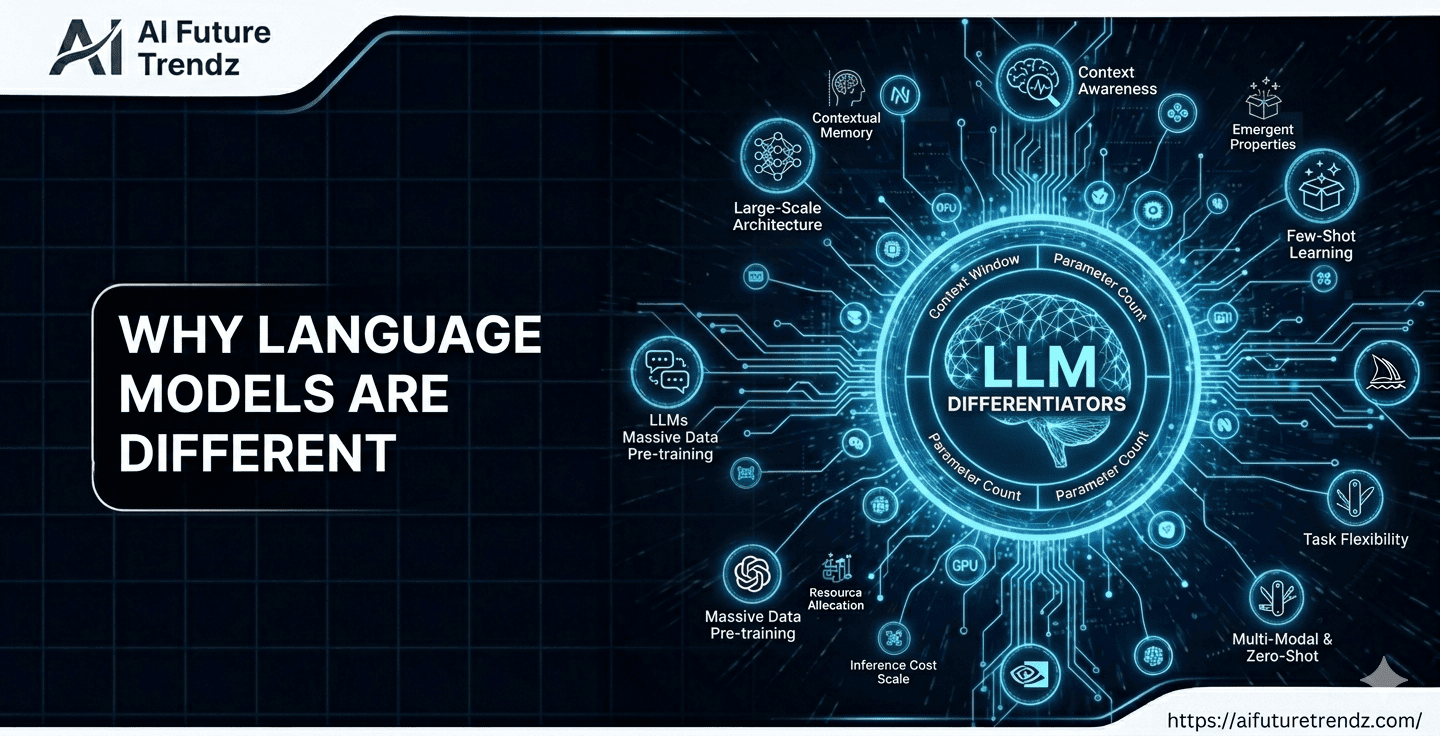

Today, there are many different large language models available. At a surface level, they all seem similar. You ask a question. They generate text. But in practice, models differ significantly. Some are better at reasoning. Some are better at coding. Some are cheaper. Some are faster. Some handle very long documents.

Under the hood, all of them are trained on the same core task: predicting the next token in a sequence of text. The differences in behavior come from how they are built, trained, and optimized.
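To make "predict the next token" concrete, here is a toy sketch that counts which word follows which in a tiny corpus and predicts the most frequent follower. It is only an illustration: the corpus and the function names `train_bigram` and `predict_next` are made up for this example, and real LLMs use neural networks over subword tokens rather than simple counts.

```python
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Count how often each token follows each other token."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def predict_next(counts, token):
    """Return the most frequent follower of `token`, or None if it was never seen."""
    followers = counts.get(token)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical ten-word corpus, split on whitespace for simplicity.
corpus = "the cat sat on the mat and the cat slept".split()
model = train_bigram(corpus)

# "cat" follows "the" twice and "mat" follows it once, so "cat" is predicted.
print(predict_next(model, "the"))
```

An LLM does the same kind of thing at a vastly larger scale: it assigns a probability to every possible next token given everything that came before, not just the previous word.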

Key Factors That Differentiate LLMs

- Model size (parameters)

- Training data quality

- Fine-tuning approach

- Context window length

- Hardware requirements

- Optimization goals (speed vs reasoning)

Let's look at these factors in more detail.

Key Takeaways

- Large language models differ in size, training data, and optimization strategies.

- Larger models generally perform better at complex reasoning but are slower and more expensive.

- Training data quality strongly influences what a model is good at, such as coding or conversation.

- The context window determines how much text a model can process at once.

- Different models are optimized for different use cases like research, chatbots, coding, or enterprise automation.

Model Size (Number of Parameters)

Language models contain parameters, which are internal weights adjusted during training. These weights determine how the model processes information. A larger model contains more parameters, allowing it to capture more complex patterns in language.

- Frontier Models (hundreds of billions to trillions of parameters): These are the heavyweights, such as GPT-5 and Gemini 3 Ultra, used for breakthrough scientific research or complex autonomous agents. Vendors generally do not publish parameter counts for proprietary models, so the size ranges in this list are estimates for those models.

- Mid-Range Models (70B – 400B Parameters): Models like Llama 4 or Claude 4 Sonnet are smaller than frontier models but still very powerful. They are excellent at coding, problem-solving, writing, and understanding complex ideas.

- Small Language Models (SLMs) (<20B Parameters): Models like Mistral 7B or Phi-4 are designed to be fast and efficient. They can run on devices like laptops, phones, or edge systems with limited hardware. While they are smaller, they often perform very well on specific, focused tasks because they are trained using high-quality and carefully selected data.

In general, larger models tend to perform better on reasoning-heavy tasks, complex instructions, and multi-step problems. However, they also require more computing power to run. This increases cost and often reduces speed.
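A rough way to see why size drives hardware needs is to estimate the memory needed just to hold the weights: number of parameters times bytes per parameter. The sketch below is a back-of-the-envelope calculation, not a sizing tool. The function name `weight_memory_gb` is made up for this example, and the estimate ignores activations, the attention cache, and framework overhead, all of which add to the real footprint.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}


def weight_memory_gb(num_params, precision="fp16"):
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


for name, params in [("7B", 7e9), ("70B", 70e9), ("400B", 400e9)]:
    fp16 = weight_memory_gb(params, "fp16")
    int4 = weight_memory_gb(params, "int4")
    print(f"{name}: about {fp16:.0f} GB in fp16, {int4:.1f} GB in int4")
```

By this estimate a 7B model needs about 14 GB in 16-bit precision, which is laptop territory once quantized, while a 400B model needs about 800 GB and therefore has to be spread across multiple accelerators.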

Training Data

Not all models are trained on the same type of content. Some are trained heavily on programming data. Others focus more on conversational text. Some include more scientific material. Some include more multilingual content.

The type and quality of training data strongly influence what the model does well. A model trained extensively on code will usually perform better in coding tasks. A model trained more on dialogue data may sound more natural in conversations.

Fine-Tuning

After initial training (pre-training), many models go through additional training, typically supervised fine-tuning on curated examples followed by training with human feedback. This step improves safety, instruction-following ability, and response quality. Two models of similar size can feel very different because of how they were fine-tuned.

Context Window

Language models process text in units called tokens. A token is not exactly a word. It may be a full word, part of a word, or a symbol. When you send text to a model, it converts that text into tokens.

The context window defines how many tokens the model can handle at once. A larger context window allows the model to read longer documents or maintain longer conversations. However, larger context windows require more memory and computation, which increases cost.
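The sketch below illustrates both ideas: splitting text into token-like pieces and checking whether a request fits in a context window. The `rough_tokenize` function is a crude stand-in invented for this example; real models use learned subword vocabularies, so actual token counts will differ. The check also assumes that the prompt and the generated output share one window, which is common but worth confirming for the model you use.

```python
import re


def rough_tokenize(text):
    """Split text into word-like chunks and single punctuation marks.

    This is only an approximation of a real subword tokenizer.
    """
    return re.findall(r"\w+|[^\w\s]", text)


def fits_in_context(prompt_tokens, max_output_tokens, context_window):
    """Check that the prompt plus the reserved output budget fits in the window."""
    return prompt_tokens + max_output_tokens <= context_window


tokens = rough_tokenize("Tokens aren't exactly words.")
print(tokens)       # "aren't" is split into several pieces
print(len(tokens))

# A short prompt with a 1,000-token output budget easily fits in a 128k window.
print(fits_in_context(len(tokens), max_output_tokens=1_000, context_window=128_000))
```

Note that the five-word sentence produces seven pieces here, which is why token counts are usually higher than word counts.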

Cost

Larger models require more powerful hardware, often multiple high-end GPUs. Running these systems continuously is expensive. In addition, companies need to recover the cost of training, which may involve months of work on massive computing clusters.

When you use an API, you are usually charged per token. You pay for the tokens in your input and the tokens generated in the output. Longer prompts and longer responses mean higher cost. Providers typically charge more per token for more advanced models because those models require more compute per request.

| Model Tier | Average Input Cost (per 1M tokens) | Average Output Cost (per 1M tokens) | Best Use Case |
|---|---|---|---|
| Frontier (e.g., GPT-5 Pro) | $15.00 - $20.00 | $60.00 - $160.00 | High-stakes reasoning, novel discovery |
| Mid-Range (e.g., Claude 4 Sonnet) | $3.00 | $15.00 | Enterprise automation, coding |
| Efficient (e.g., Gemini 2.5 Flash) | $0.10 | $0.30 | High-volume chatbots, translation |
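Per-token pricing turns into a simple calculation. The sketch below uses the mid-range and efficient rows from the table above as example prices. The function name `request_cost_usd` and the request sizes are made up for illustration, and actual prices vary by provider and change over time.

```python
def request_cost_usd(input_tokens, output_tokens, input_price_per_m, output_price_per_m):
    """Cost of one request, given prices in USD per 1 million tokens."""
    total = input_tokens * input_price_per_m + output_tokens * output_price_per_m
    return total / 1_000_000


# Hypothetical request: a 2,000-token prompt and a 500-token response.
mid_range = request_cost_usd(2_000, 500, input_price_per_m=3.00, output_price_per_m=15.00)
efficient = request_cost_usd(2_000, 500, input_price_per_m=0.10, output_price_per_m=0.30)

print(f"Mid-range: ${mid_range:.4f} per request")
print(f"Efficient: ${efficient:.5f} per request")

# At volume the gap matters: cost of one million such requests.
print(f"Mid-range at 1M requests: ${mid_range * 1_000_000:,.0f}")
print(f"Efficient at 1M requests: ${efficient * 1_000_000:,.0f}")
```

Even though the output here is a quarter the length of the input, it accounts for more than half of the mid-range cost, because output tokens are priced higher than input tokens.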

Performance and Optimization

Performance varies depending on optimization goals. Some models are optimized for speed. These models respond quickly but may provide slightly less detailed reasoning. Others are optimized for depth and accuracy, resulting in slower responses but stronger outputs.

Examples of Well-Known Models

OpenAI’s GPT models are widely used in applications that require strong reasoning and balanced performance across tasks. They are often chosen for production systems where reliability matters.

Google’s Gemini models focus heavily on multimodal capabilities and integration within Google’s ecosystem. Some versions support very large context windows.

Anthropic’s Claude models emphasize safety and long-context understanding, making them popular for analyzing large documents.

Meta’s Llama models have openly available weights, released under Meta’s own license, and are commonly used by researchers and startups who want to fine-tune or self-host their own systems.

| Model | Strength | Primary Use Case | Context Window |
|---|---|---|---|
| GPT-4o | Fast Reasoning & Multimodal | Production Apps & AI Assistants | Standard (128k) |
| GPT-4 Turbo | Structured Logic & Coding | SaaS Products & Complex Workflows | Standard (128k) |
| Claude 3.5 Sonnet | Balanced Performance & Safety | Business Automation & Writing | Large (200k) |
| Claude 3 Opus | Deep Reasoning & Long-Context Stability | Research, Legal & Enterprise Docs | Large (200k) |
| Claude Opus 4.5 / 4.6 | Frontier-Level Reasoning & Agentic Tasks | Enterprise AI Agents & Complex Systems | Large+ (200k+) |
| Gemini 1.5 Pro | Ultra-Large Context & Multimodality | Massive File & Video Analysis | Ultra-Large (1M+) |
| Llama 3 (70B) | Open Weights & Customizable | Self-hosting & Fine-tuning | Variable (8k–32k) |

The key point is that there is no single “best” language model. The best model depends on the use case. A small and fast model may be ideal for a lightweight chatbot. A larger model may be necessary for advanced reasoning or complex workflows. A coding-focused model may outperform a general model for software tasks.
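One way to put this into practice is to filter models by hard requirements and then pick the cheapest one that qualifies. The sketch below is purely illustrative: the catalog entries, their prices and context sizes, and the 1 to 3 `capability` scale are placeholders invented for this example, not measurements of any real model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    context_window: int        # maximum tokens per request
    input_price_per_m: float   # USD per 1M input tokens
    capability: int            # made-up scale: 1 = basic, 3 = strongest


# Hypothetical catalog; replace with figures for the models you are evaluating.
CATALOG = [
    ModelOption("small-fast", 32_000, 0.10, 1),
    ModelOption("mid-range", 200_000, 3.00, 2),
    ModelOption("frontier", 200_000, 15.00, 3),
]


def pick_model(catalog, needed_context, min_capability):
    """Return the cheapest model that meets both requirements, or None."""
    candidates = [
        m for m in catalog
        if m.context_window >= needed_context and m.capability >= min_capability
    ]
    return min(candidates, key=lambda m: m.input_price_per_m, default=None)


# A lightweight chatbot: short prompts, basic capability is enough.
print(pick_model(CATALOG, needed_context=8_000, min_capability=1).name)

# Long-document analysis: needs a large window, so the small model is ruled out.
print(pick_model(CATALOG, needed_context=100_000, min_capability=1).name)
```

In a real evaluation the `capability` field would be replaced by results on your own test prompts, since general rankings do not always match performance on a specific task.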

Understanding these differences helps users make informed decisions. Instead of choosing a model based on popularity, they can choose based on performance, cost, and specific needs.

All modern language models are built on similar principles. What separates them is how those principles are scaled, trained, and deployed.